In the rapidly evolving field of artificial intelligence, a curious incident recently highlighted a growing crisis in scientific communication. It began with a simple conversation about a viral research paper, but it revealed something far more concerning about how we build and verify knowledge in the AI era.

The exchange below shows something remarkable: an AI system confidently repeating claims about its own architecture while simultaneously acknowledging fundamental uncertainty about those same claims.

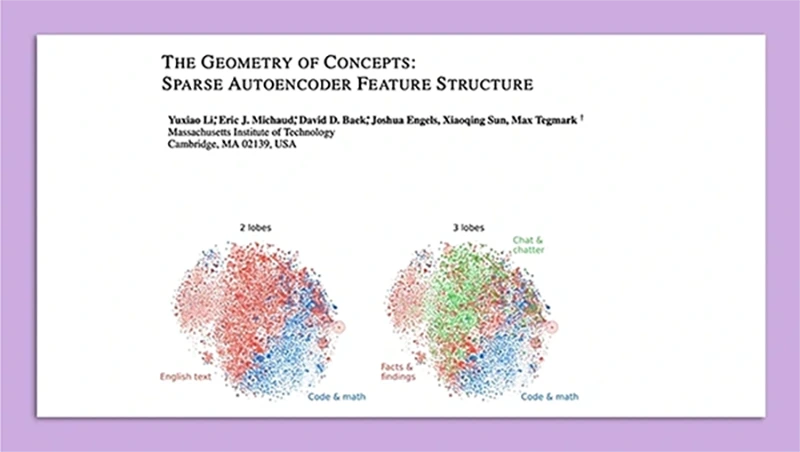

The paper offered a fascinating glimpse into the "mind" of AI systems, revealing mapped structures that appeared to mirror both biological brain organization and crystalline formations. These findings were irresistible because they suggested deep parallels between artificial and biological intelligence. But as the conversation above reveals, something crucial was missing: proper scientific rigor.

The pace of AI progress presents unique challenges to scientific replication. When models evolve monthly and access is limited, how can the scientific community verify claims? More critically, when new models build upon unverified assumptions about previous ones, we risk creating a theoretical house of cards rather than solid scientific understanding.

The pressure to share AI findings often bypasses traditional peer review. The autoencoder paper's contradiction might have been caught earlier with thorough review. Instead, exciting but unverified claims spread rapidly through the community, amplified by content creators who may lack the background to critically evaluate the research.

In AI research, the line between observation and interpretation often blurs. The case of the middle layers demonstrates this perfectly: a mathematical observation (steep slope) was transformed into a specific interpretation (abstract concepts) without sufficient evidence.

Each untested assumption becomes a potential weak point in our understanding. In AI research, the rapid pace means assumptions are often inherited and amplified without proper scrutiny. The chat interaction shows how easily these assumptions come to be treated as facts, even by AI systems themselves.

When communicating research, acknowledge the state of the evidence clearly, for example "This fascinating but not-yet-replicated study suggests...", and provide sources. Let audiences participate in the scientific process rather than just consume conclusions.

The future of AI depends not just on our ability to make discoveries, but on our commitment to verify them properly. The incident with the autoencoder paper serves as a crucial reminder: in our rush to understand these fascinating systems, we must not forget the principles that make that understanding reliable. The scientific method evolved for a reason: it is our best tool for distinguishing reality from wishful thinking.

As we push the boundaries of artificial intelligence, these principles become more important, not less. Our challenge is to maintain scientific rigor while keeping pace with technological progress.

This case study offers a glimpse into the systemic challenges facing AI research communication and suggests a path forward for researchers, the AI community, and content creators. By addressing these challenges head-on, we can help ensure that the knowledge we build today is a sound foundation for the AI systems of tomorrow.